Every conversation in enterprise technology right now is about AI. Agents, copilots, generative systems — the excitement is real and the potential is significant. But in the rush toward intelligence, something foundational is being taken for granted: automation.

Automation isn’t competing with AI. It’s the infrastructure that makes AI work in practice. The more autonomous your systems become, the more the underlying structure matters.

Structure is not optional

When AI agents can initiate workflows, approve decisions, and trigger actions downstream, the capability is impressive. But without structure, it’s also unpredictable. Every AI-driven action still needs to happen within a defined framework — with clear boundaries, approval layers, and audit trails that can answer three basic questions: What happened? Why? Who authorized it?

Guardrails aren’t a sign of caution. They’re what allow you to scale with confidence. The more autonomous the system, the more important it is that humans know exactly where control sits and where it doesn’t.
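To make this concrete, here is a minimal sketch of an approval gate that writes an audit record for every attempted action, including the ones it blocks. It is illustrative only and is not how Power Platform or Copilot implements approvals. The names `GuardedExecutor`, `AuditRecord`, and `auto_approved_actions`, along with the example actions, are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AuditRecord:
    what: str           # What happened?
    why: str            # Why? (the reason the agent gave)
    authorized_by: str  # Who authorized it?
    executed: bool      # Whether the action was allowed to run
    timestamp: str      # UTC time of the attempt, ISO 8601


@dataclass
class GuardedExecutor:
    # Hypothetical policy: actions in this set may run without a human approver.
    auto_approved_actions: frozenset
    audit_log: list = field(default_factory=list)

    def run(self, action: str, reason: str, approver: Optional[str] = None) -> bool:
        """Decide whether an action may run and log the attempt either way."""
        if action in self.auto_approved_actions:
            authorized_by, executed = "policy:auto-approve", True
        elif approver is not None:
            authorized_by, executed = approver, True
        else:
            # Outside the boundary and nobody signed off: block it.
            authorized_by, executed = "none", False

        self.audit_log.append(
            AuditRecord(
                what=action,
                why=reason,
                authorized_by=authorized_by,
                executed=executed,
                timestamp=datetime.now(timezone.utc).isoformat(),
            )
        )
        return executed


executor = GuardedExecutor(auto_approved_actions=frozenset({"send_reminder"}))
executor.run("send_reminder", "invoice overdue by 7 days")
executor.run("issue_refund", "customer reported a duplicate charge")
executor.run("issue_refund", "customer reported a duplicate charge", approver="finance.lead")

for record in executor.audit_log:
    print(record.what, "|", record.why, "|", record.authorized_by, "|", record.executed)
```

The design choice worth noting is that blocked attempts are logged too. An audit trail that only records successful actions cannot show where the boundary actually held.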

At Maarga, we work with Microsoft’s Power Platform and Copilot to build automation solutions for enterprises. One thing we have seen consistently: the quality of an automated system is often determined before any AI is introduced — by how clearly the underlying process is defined.

The data question runs deeper than clean data

Most people know the basics — bad data gives bad outputs. But there’s a more practical challenge worth naming.

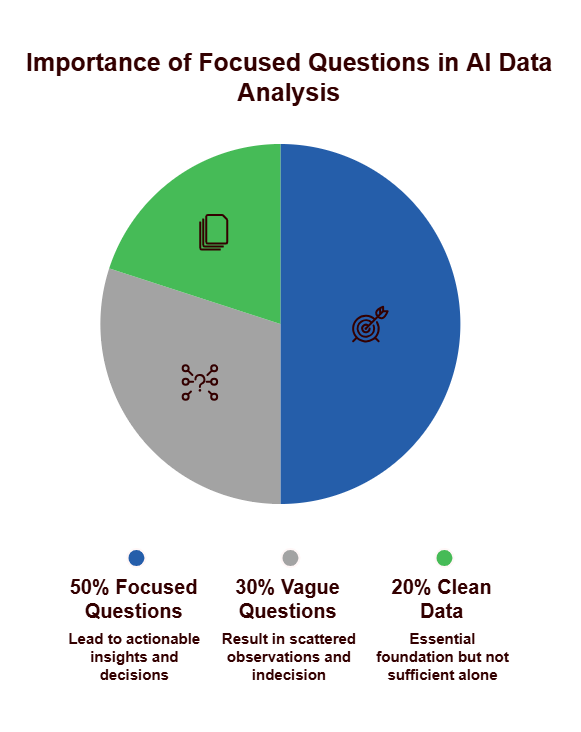

Even with clean, well-structured data, AI can produce findings that point in many directions at once. AI is very good at finding patterns. But patterns toward what end? Before you let AI work on your data, be clear about the question you’re trying to answer and the outcome you’re working toward. Without that, you get a lot of interesting observations that don’t add up to a decision.

The lens you bring to your data shapes the intelligence you extract from it. AI follows the question you ask. A vague question gets you scattered results. A focused one gets you something you can act on.

The variable most implementations overlook: human belief

Change management and adoption are known challenges. A more specific one deserves attention: whether the people using the system actually believe what it tells them.

Think about someone who is skeptical of AI — not loudly, just quietly unconvinced. When they see an AI-generated recommendation or action list, they may look for reasons to dismiss it. They might approve it without really standing behind it, communicate it half-heartedly to their team, or quietly override it without saying so. On paper, the system is being used. In practice, the intelligence isn’t flowing through to action.

This is not a technology problem. It’s a human one. And it doesn’t get solved by improving the model.

When designing systems, the human factor needs to be built into the thinking from the start — not as a training exercise at rollout, but as a genuine design consideration. How will people receive AI outputs? What would make them trust a recommendation enough to act on it and communicate it with conviction? These questions directly affect outcomes — and therefore ROI.

Putting it together

AI and automation work best together — but only when the process underneath is solid, the data is being asked the right questions, and the people using the system are genuinely engaged with what it produces.

The enterprises that get the most value from AI won’t necessarily be the fastest to deploy it. They’ll be the ones who build carefully — with structure, with intent, and with an honest understanding of the humans in the loop.